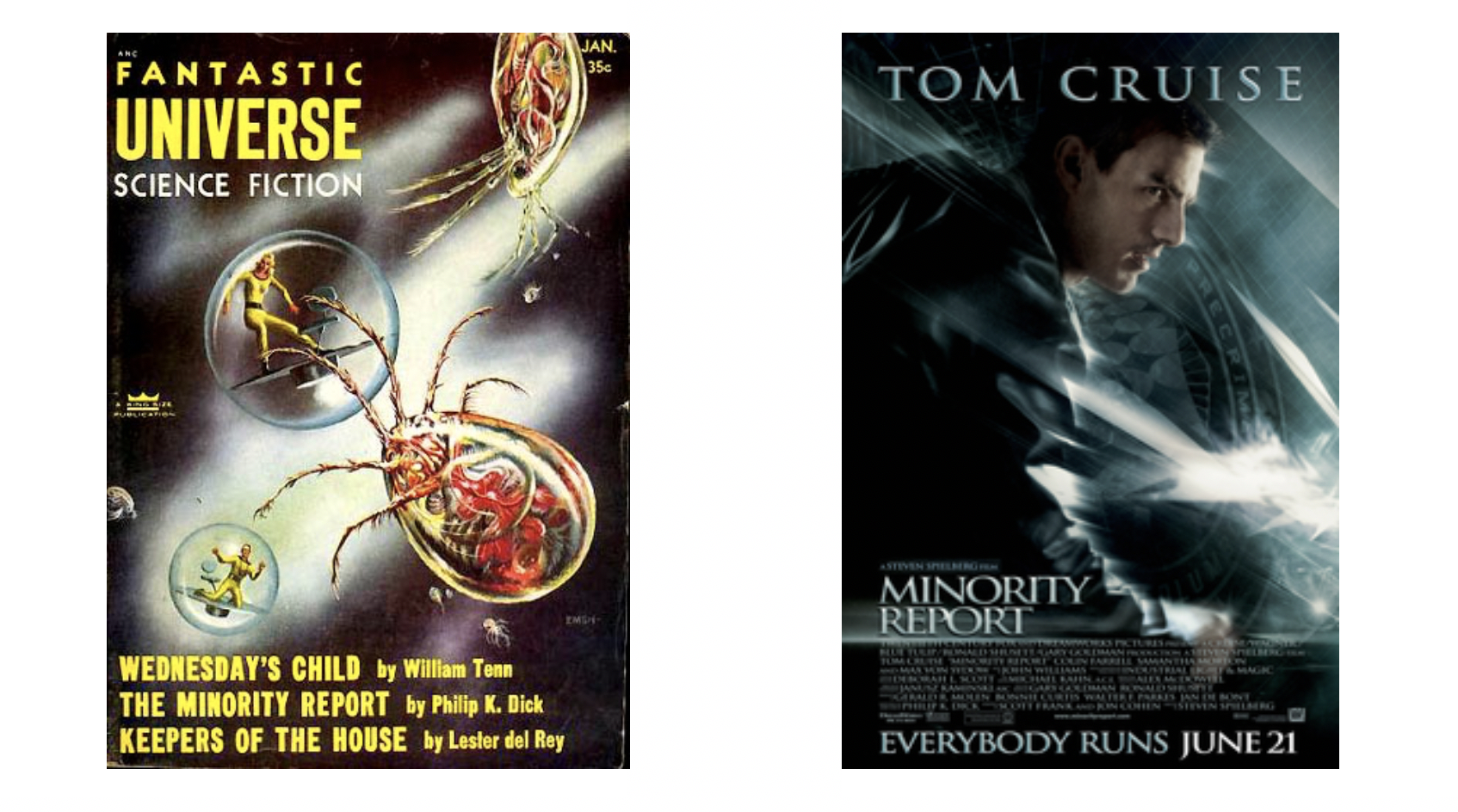

In 1956, American author Philip K. Dick published “The Minority Report”, a science fiction short story in which a special Pre-Crime Division relies on several mutants to predict crimes before they occur.

At the start of the story, the Chief of the Pre-Crime Division receives a report predicting that he himself will become a murderer within the next week. Not knowing whom to trust, whether in private, at work, or in society, the protagonist struggles to find a balance between individual liberty and authority.

In 2002, Steven Spielberg directed the movie “Minority Report”, starring Tom Cruise and loosely based on Philip K. Dick’s short story from nearly 50 years earlier. Crimes are predicted by three mutated humans, called “Precogs”, who “pre-visualize” crimes through visions they receive from the future. Would-be murderers are imprisoned in a benevolent virtual reality before they even have a chance to commit the crime. Meanwhile, the federal government is on the verge of adopting this controversial program nationwide. Spielberg also imagines a citywide optical recognition system that Tom Cruise’s character must evade as he moves through the city to avoid detection and arrest.

What does all of this have to do with Artificial Intelligence (AI), Machine Learning (ML), Neural Networks (NN), and/or Natural Language Processing (NLP)?

A lot! Until the beginning of this millennium, all of these technologies were considered science fiction. Today, optical/facial recognition and real-time personalized advertising have matured to the point where they are ready for, and already in, commercial use.

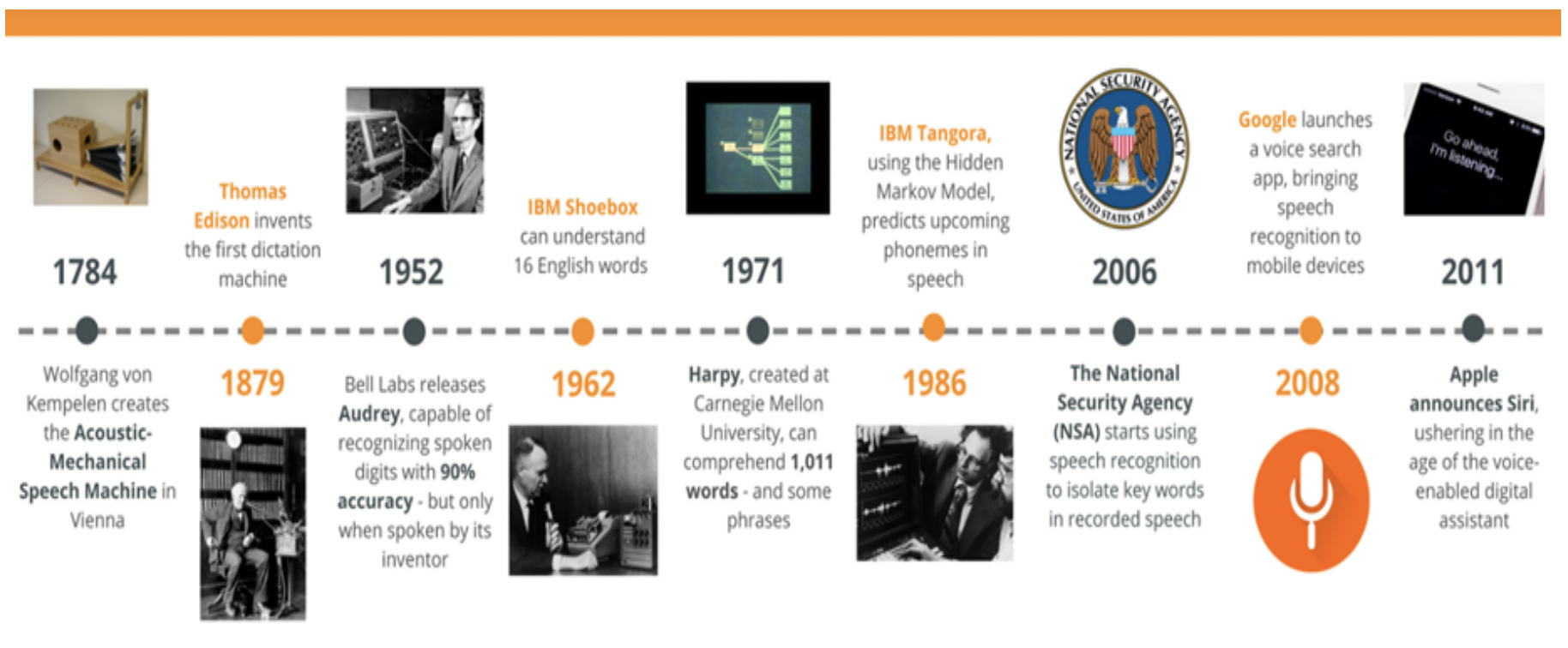

Here is a brief history of Artificial Intelligence:

In the summer of 1956, John McCarthy organized the Dartmouth Summer Research Project, a workshop widely considered to be the birthplace of Artificial Intelligence. The discussions held there covered many topics, including the rise of symbolic methods, systems focused on limited domains (early expert systems), and deductive versus inductive systems. Many of the technologies discussed at Dartmouth, which sounded like science fiction in the 1950s, are now used by industry-leading companies.

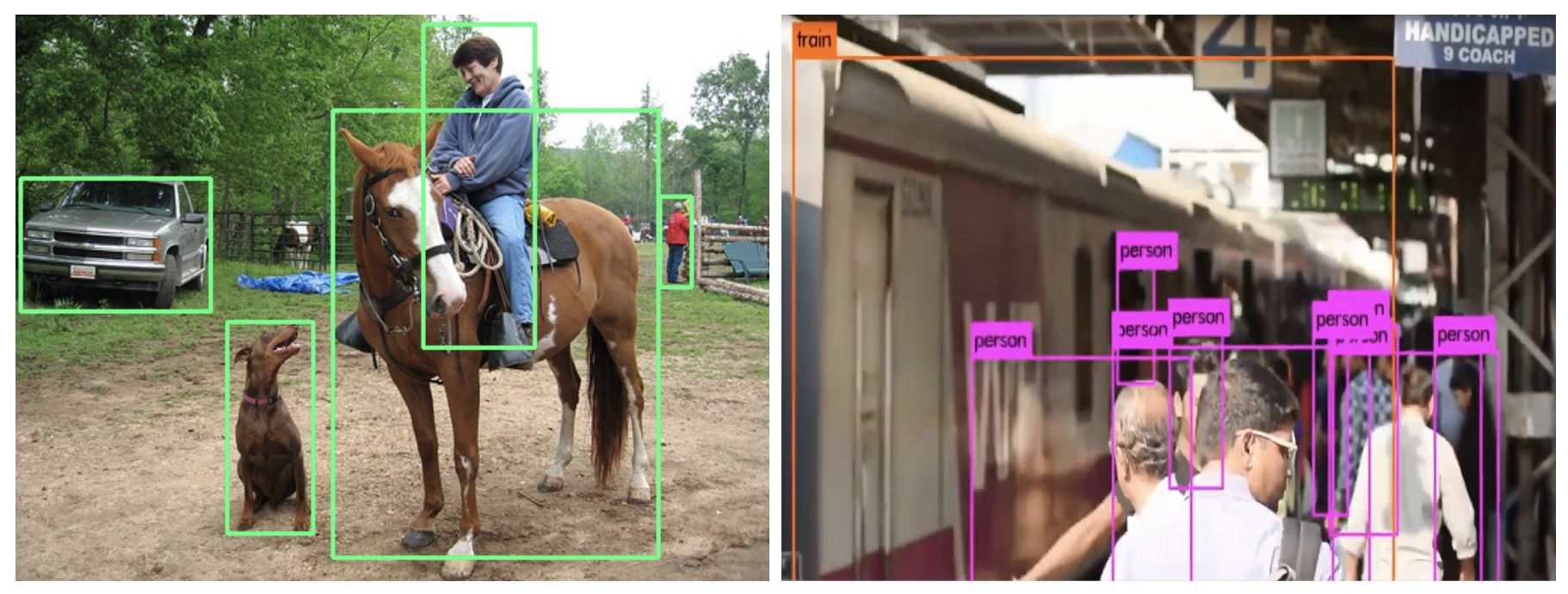

Take, for instance, Google’s TensorFlow framework, started by Google’s Brain team in 2011 and released as open source under the Apache 2.0 license in November 2015. TensorFlow is a general-purpose machine learning library for building and training models such as deep neural networks. Among many other uses, it can be used to train models that recognize objects in real-time video streams or in stored video recordings.

“Person”, “bicycle”, “car”, “motorbike”, “train”, “bus”, “airplane”, “truck”: these are all examples of objects that can be detected in a live video stream and acted upon in real time.

Whereas a recognition (classification) algorithm only predicts class labels, a detection algorithm also locates the objects it finds, and across successive video frames those locations can be used to track them. Rather than assigning the whole image to a single category, it can detect multiple objects within one image. Some detectors, for example, divide an image into regions and predict bounding boxes and class probabilities for each region; the bounding boxes are then weighted by their predicted probabilities.
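To make the last step more concrete, here is a minimal, pure-Python sketch of how a detector’s raw per-region predictions might be post-processed. It assumes each predicted box carries an overall confidence that it contains an object plus per-class probabilities; the box’s score is their product, weak boxes are dropped, and overlapping duplicates of the same class are removed with greedy non-maximum suppression. The `Box` type, the `detect` function, the thresholds and the sample data are all hypothetical illustrations, not TensorFlow’s API or any specific model’s implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Box:
    # Hypothetical prediction format: corner coordinates plus scores.
    x1: float
    y1: float
    x2: float
    y2: float
    confidence: float  # predicted probability that the box contains any object
    class_probs: dict = field(default_factory=dict)  # e.g. {"car": 0.9, "truck": 0.1}


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a.x1, b.x1), max(a.y1, b.y1)
    ix2, iy2 = min(a.x2, b.x2), min(a.y2, b.y2)
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a.x2 - a.x1) * (a.y2 - a.y1)
    area_b = (b.x2 - b.x1) * (b.y2 - b.y1)
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def detect(boxes, score_threshold=0.5, iou_threshold=0.5):
    """Weight each box by its predicted probabilities, drop weak boxes,
    then suppress overlapping duplicates of the same class (greedy NMS)."""
    candidates = []
    for box in boxes:
        # Pick the most likely class for this box.
        label, p = max(box.class_probs.items(), key=lambda kv: kv[1])
        score = box.confidence * p  # class-specific score
        if score >= score_threshold:
            candidates.append((score, label, box))
    # Visit the strongest boxes first.
    candidates.sort(key=lambda c: c[0], reverse=True)
    kept = []
    for score, label, box in candidates:
        # Keep a box unless a stronger box of the same class overlaps it heavily.
        if all(k_label != label or iou(box, k_box) < iou_threshold
               for _, k_label, k_box in kept):
            kept.append((score, label, box))
    return [(label, score, box) for score, label, box in kept]


# Hypothetical predictions for one video frame.
sample_boxes = [
    Box(10, 10, 50, 50, 0.9, {"car": 0.95, "truck": 0.05}),
    Box(12, 12, 52, 52, 0.8, {"car": 0.90, "truck": 0.10}),  # near-duplicate car
    Box(100, 40, 130, 120, 0.7, {"person": 0.99}),
    Box(200, 200, 210, 210, 0.3, {"bicycle": 0.6}),  # too weak, filtered out
]

for label, score, box in detect(sample_boxes):
    print(label, round(score, 3))
```

With these sample values, the near-duplicate car box is suppressed because it overlaps the stronger car box heavily, and the weak bicycle box falls below the score threshold, so only one car and one person remain. Real detectors typically run this kind of filtering on many boxes per frame with vectorized operations rather than Python loops.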

All of these technologies require highly specialized domain expertise, detailed knowledge of the specific problem being analyzed, and the right technology to make it all happen.

How do you bring these specialized algorithms and services together into an end-to-end solution that adapts quickly to emerging trends in your industry and is ready for real-time application meshing?

VANTIQ is the Answer

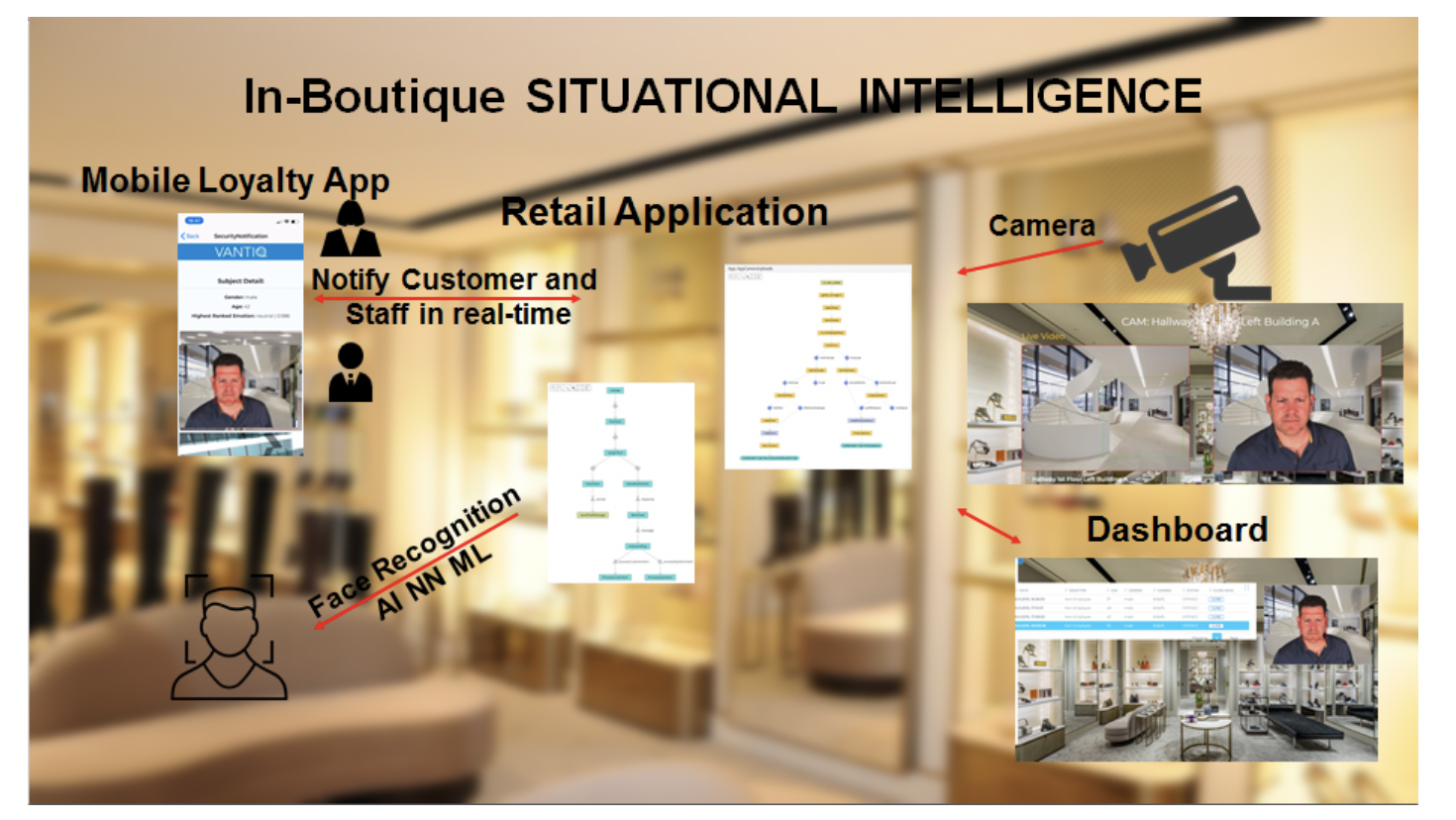

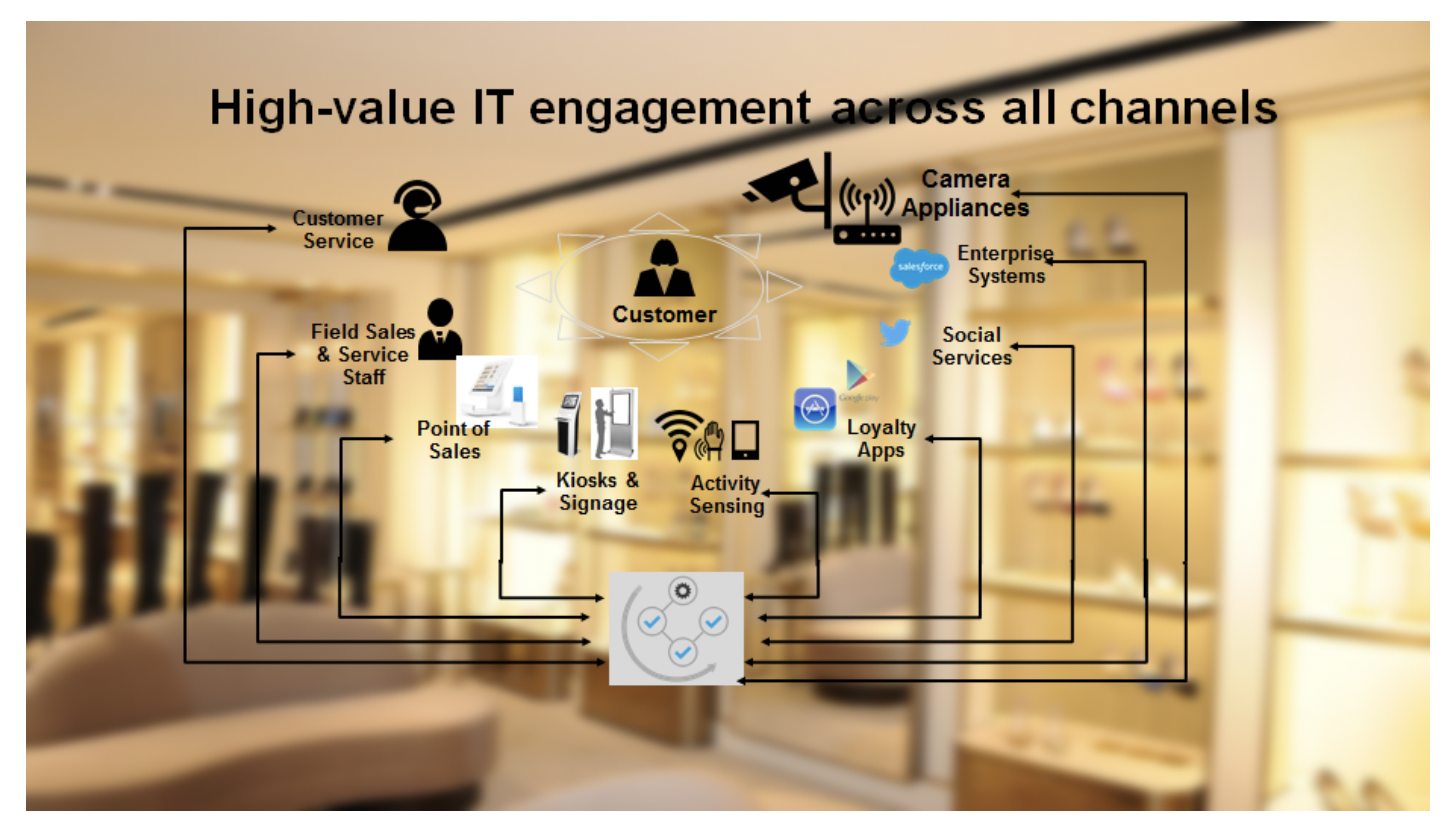

Take, for instance, the retail sector and an In-Store Situational Intelligence application that continuously engages users, devices, and systems across both physical and digital channels. Customers expect consistent, seamless experiences as they interact with businesses online, in store, and through mobile apps. They expect to be recognized, and they insist on the freedom to browse, shop, and buy in the way that is most convenient for them. The challenges with the traditional retail approach are:

- Inability to seamlessly connect users, devices, and things

- Lack of continuous, intelligent event management

- Limited personalization and recommendations

- Fragmented systems that can’t support digital customer demands and business requirements

- Limited or non-existent system-wide view of real-time situations

A real-time approach is needed that integrates cloud and IoT to support real-time actions in a distributed and dynamic environment, using the latest artificial intelligence capabilities. If retailers can deliver a customer experience that is seamless and personalized, they will be better equipped to grow and to build lasting relationships with customers and users. With the ability to quickly connect new digital ecosystems and support distributed IoT requirements, the business is positioned to maximize revenue opportunities.

VANTIQ can create valuable collaborations of people, devices, and connected things, with the flexibility needed to interact more effectively with customers and systems, personalize engagement, and drive a seamless omni-channel experience.

In any industry, the power of an amazing brand experience should not be underestimated. A positive experience, one that is seamless, relevant, and consistent across all customer touch points, can turn a customer into a brand ambassador.

Today, 71 percent of consumers expect to view in-store inventory online, and 51 percent expect a retailer’s product offerings to be the same across multiple channels. Meanwhile, 50 percent of shoppers expect to be able to purchase a product online and then pick it up in-store.

However, only about a third of retailers have operationalized even the basics of in-store pickup and cross-channel inventory visibility. To be frank, the omni-channel, digital customer experience that today’s consumers demand exceeds the current IT capabilities of most retail companies. Any initiative to improve customer service goes beyond creating or redesigning front-end systems. Instead, it becomes an initiative to modernize applications, rationalize application portfolios, deploy new mobile capabilities, and rigorously test the resulting environment.

In today’s consumer-driven world, customers expect to engage with a retailer on their own terms. Gone are the days of dictating a single, branded experience to customers en masse. Instead, we are entering a period of increasing personalization. This will require retailers to be proactive: creating modern applications, placing them on a flexible infrastructure, and then deploying them across all channels. This is the only way to effectively deliver content that is relevant on any device and in any environment.

VANTIQ provides a real-time experience that can be personalized to the needs and tastes of your organization. In order to achieve this, people must be connected with devices and things across a distributed ecosystem. This requires solving real-time event management challenges and creating solutions that enhance and improve customer experiences and at the same time maximize revenue opportunities.

VANTIQ is the application development environment that seamlessly integrates various event sources in an agile manner that lets organizations create and deploy new applications with unprecedented speed.

VANTIQ is proud to be part of the Swiss CognitiveValley Movement, The Global AI Hub, which kicked off successfully on September 17, 2019 at EPFL (École Polytechnique Fédérale de Lausanne), the research institute and university in Lausanne, Switzerland.